
Over the past several months, I have had conversations with senior leaders at several large, well-established supply chain organizations with strong teams responsible for Integrated Business Planning (IBP) and supply chain network design and optimization.

These teams are technically strong. They know how to build models. They are comfortable with large data sets. Many are now incorporating AI tools into their workflows.

But the same concern keeps surfacing across those conversations:

The analytical capability is improving—but the decision-making discipline around it is not keeping pace.

Analysts move quickly to building models without fully defining the business problem. Assumptions are not always surfaced or challenged. Outputs are evaluated mathematically, not operationally. And recommendations are not always translated into real-world implications.

Leaders are concerned about this and are looking for ways to address it. I share their concern because I have been in their shoes.

Earlier in my career, across different roles at Coca-Cola, we did not formally teach critical thinking. We learned it through experience and often through mistakes. Three situations shaped how I think about this today.

While working with optimization groups at Coca-Cola North America, we overbuilt capacity for Powerade. The model did exactly what it was supposed to do. The problem was upstream of the model.

We took the demand forecast at face value. At the time, we deferred to the brand teams without interrogating their assumptions. We never asked what was driving the projected volume—whether the competitive dynamics supported it, whether the channel assumptions were realistic, whether pricing and distribution plans were grounded, whether overall market growth would materialize as projected.

The consequence was idle capacity, production lines that were purchased and never installed, write-offs, and a fundamental change to our process. Going forward, brand and supply chain teams were both required to sign off on future business cases. The model was technically correct. The thinking around the model had not been.

Later, within Coca-Cola Supply, we made a network decision to close a plant in Little Rock. On paper, the remaining system had the capacity to absorb the volume. The model said so.

What the model assessed was production capacity based on rated line speeds. What it did not account for was dock and storage capacity at peak, or the practical limitations of standing up a new shift at the receiving plants. Those constraints were real. They were also invisible in the model.

In the short term, we had to source suboptimally from other plants, which directly undermined the business case we had built to justify the closure. The math was right. The operational validation was incomplete.
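The Little Rock gap can be sketched as a binding-constraint check: treat each operational limit at a receiving plant as its own ceiling and take the smallest one, rather than looking at rated line speed alone. This is a minimal illustration, not the model we used. The constraint names, the volumes, and the `effective_weekly_capacity` helper are all hypothetical.

```python
def effective_weekly_capacity(limits):
    """Return (binding constraint, capacity): the smallest ceiling wins."""
    binding = min(limits, key=limits.get)
    return binding, limits[binding]


# Hypothetical weekly ceilings (cases) for one receiving plant at peak.
receiving_plant = {
    "rated_line_speed": 120_000,
    "dock_throughput_at_peak": 95_000,
    "storage_turnover_at_peak": 90_000,
    "staffed_shifts": 100_000,
}

# Hypothetical volume the plant must absorb after the closure.
required_volume = 110_000

constraint, capacity = effective_weekly_capacity(receiving_plant)

# What a line-speed-only view concludes versus the operationally validated view.
line_only_ok = receiving_plant["rated_line_speed"] >= required_volume
validated_ok = capacity >= required_volume

print(f"Line-speed-only check passes: {line_only_ok}")
print(f"Binding constraint: {constraint} ({capacity:,} cases)")
print(f"Validated check passes: {validated_ok}")
```

With these made-up numbers, the line-speed view says the plant can absorb the volume while the validated view says it cannot. The arithmetic is trivial. The work is in learning from the people who run the docks and staff the shifts what the other ceilings actually are.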

By the time I led the National Product Support Group, we had evolved. Decisions like the launch of mini cans required cross-functional alignment, scenario-based thinking, and a clear understanding of how demand would actually be generated across channels and routes to market.

We got that one right, not because the model was more sophisticated, but because the discipline around the model was stronger. We had learned, the hard way, to ask the questions the model could not ask for itself.

There is a line I first heard from Chris Janke: "Most of the work is outside the model." He may have learned it from someone else; I don’t know the original source, but it is the framing that has stayed with me. With the advances in data and machine learning we have seen over the past decade, the share of the work that sits outside the model may be closer to 75 percent today.

We are better than ever at collecting and cleansing large data sets, processing high volumes of information, and identifying mathematical errors. But the most important work still happens outside the model: defining the right business question, building meaningful scenarios, interpreting outputs in real-world terms, and stress-testing the assumptions that drive the recommendation.

Janke captured this precisely in documenting his own experience with a modeling error that illustrated the point. An analyst had validated the math on a labor cost model—everything checked out numerically. But when the output was translated into real-world terms, it implied production workers earning roughly $300,000 per year while working approximately 60 hours total annually. The math was internally consistent. The result was operationally impossible. The question that should have been asked early: does this make sense in the context of how the business actually operates? It was not asked until after the analysis was complete.
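A check like the one that eventually caught this error can be sketched in a few lines: convert the model's totals into per-worker figures and compare them with what an operations manager would consider plausible. This is a minimal sketch, not Janke's method. The headcount, cost, and hour totals are hypothetical values chosen to reproduce the figures above, and the plausibility thresholds are assumptions a team would set for its own business.

```python
def implied_labor_metrics(total_labor_cost, headcount, total_hours):
    """Translate model totals into per-worker, real-world figures."""
    annual_pay = total_labor_cost / headcount
    hours_per_worker = total_hours / headcount
    hourly_rate = annual_pay / hours_per_worker
    return annual_pay, hours_per_worker, hourly_rate


def plausibility_flags(hours_per_worker, hourly_rate,
                       max_hourly_rate=100.0, min_annual_hours=1_000.0):
    """Flag per-worker figures outside assumed operational bounds."""
    flags = []
    if hourly_rate > max_hourly_rate:
        flags.append(f"implied hourly rate ${hourly_rate:,.0f} exceeds ${max_hourly_rate:,.0f}")
    if hours_per_worker < min_annual_hours:
        flags.append(f"implied {hours_per_worker:,.0f} hours per worker per year is below {min_annual_hours:,.0f}")
    return flags


# Hypothetical model output: 100 workers, $30M labor cost, 6,000 total hours.
annual_pay, hours_per_worker, hourly_rate = implied_labor_metrics(
    total_labor_cost=30_000_000, headcount=100, total_hours=6_000
)
flags = plausibility_flags(hours_per_worker, hourly_rate)

print(f"Implied annual pay per worker: ${annual_pay:,.0f}")
for flag in flags:
    print("CHECK:", flag)
```

The totals can reconcile perfectly and still fail both checks. The point of the sketch is the order of operations: run the translation into per-worker terms before the analysis is considered complete, not after.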

The discipline to ask that question is not modeling skill. It is a critical thinking skill.

A common pattern today is that analysts move quickly to building the model. The harder and more important step of defining the business decision before the model is built gets compressed or skipped entirely. The questions that require that step are not complicated, but they take time and engagement to answer well:

These questions are not as clean as coding a model. They require conversations with people who understand the constraints, not just the data. That is part of why they get skipped.

This is where more serious errors occur. Model issues can usually be fixed with more time. Misinterpretation of output leads to bad decisions that are much harder to unwind.

The Powerade and Little Rock situations both illustrate this. In each case, the model was not wrong in any technical sense. What was missing was the translation layer, where someone asks, “what changes on a Tuesday night shift, at Plant B, when demand spikes 12 percent?”

That translation layer does not happen automatically. It has to be built into how teams work. And it is exactly the discipline that gets squeezed when organizations reward speed and analytical sophistication above everything else.

Critical thinking in supply chain is not skepticism for its own sake, and it is not a soft skill that sits alongside the analytical work. It is a discipline applied to decisions and not just to models. The word itself points to what we mean: kritikos, the Greek root, means skilled in judging, able to discern*. That is the right definition for our purposes.

It means asking whether the right question is being answered before investing in answering it well. It means making the assumptions that drive a recommendation visible and testable. It means translating analytical output into operational consequence: what actually changes, for whom, at what cost, and under what conditions the answer flips.

That discipline shows up or breaks down at four specific moments:

When these moments are skipped because of time pressure, overconfidence in tools, or a culture that rewards analytical speed over decision rigor, the gap between analysis and action grows. The Powerade and Little Rock situations were both failures at these moments, not failures of the models themselves.

*DeCesare, M. (2009). Casting a critical glance at teaching “critical thinking.” Pedagogy and the Human Sciences, 1(1), 73–77.

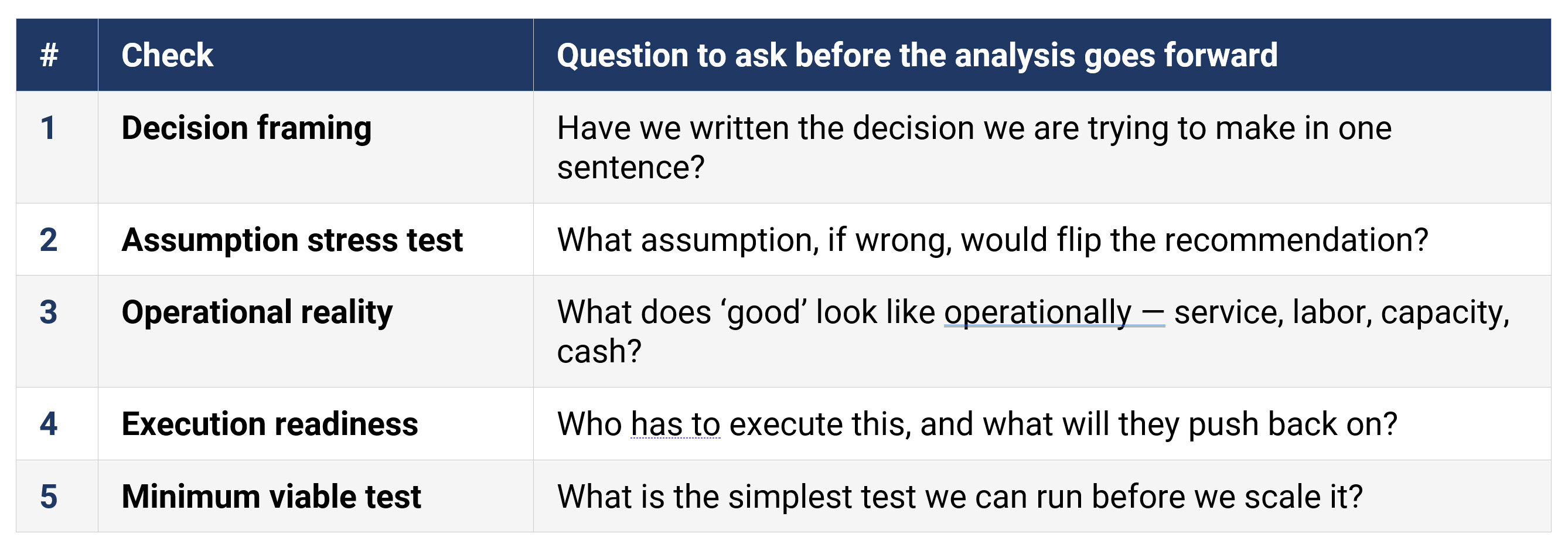

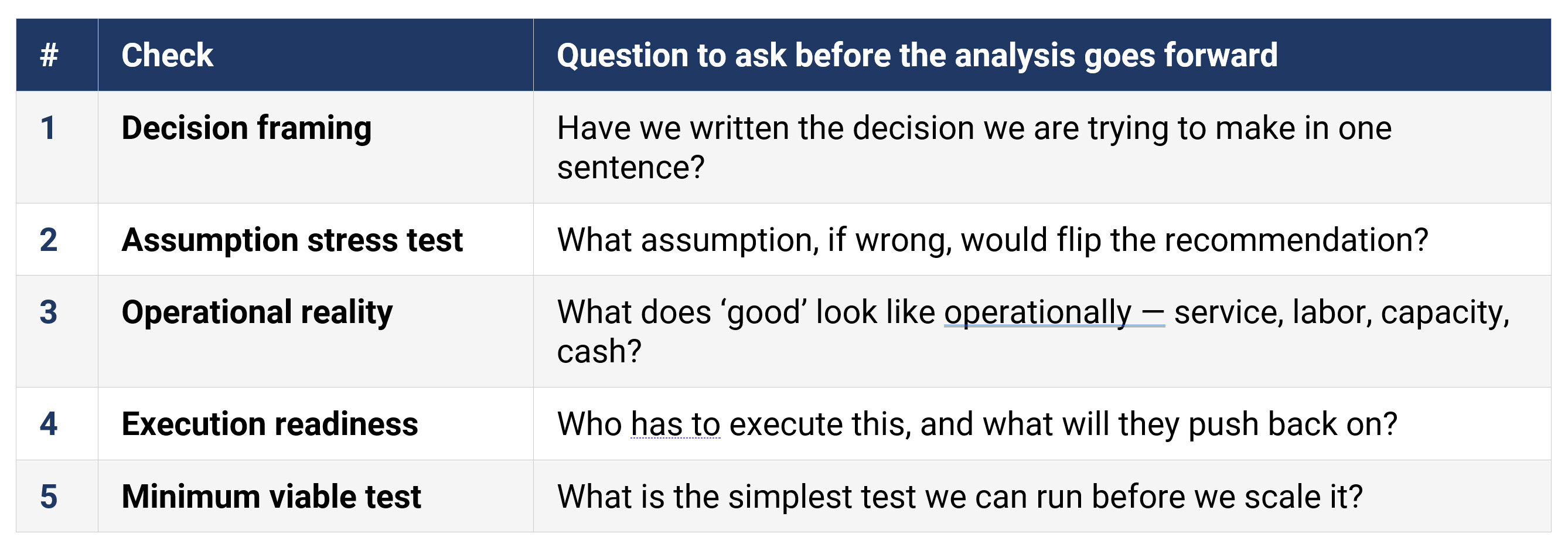

Before an analysis or recommendation moves forward, teams should be able to answer five questions clearly. If any of them cannot be answered, the analysis is not ready—regardless of how strong the model is.

Figure 1: A Five-Question Diagnostic (accessible version)

These are questions that should have specific, grounded answers before a recommendation reaches leadership. If the team cannot answer question two (what assumption would flip the result), then the recommendation rests on unexamined ground. If question four cannot be answered, the change management work has not started yet.

In the Powerade situation, questions one and two were the misses. In Little Rock, it was question three. The models were not the problem. The diagnostic would have surfaced both gaps before the decisions were made.
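Question two, the assumption that would flip the result, can often be answered with a simple break-even calculation. The sketch below asks how much of a demand forecast has to materialize before a capacity investment pays for itself. It is an illustration under stated assumptions, not the Powerade business case: the forecast, margin, and cost figures are hypothetical, and the linear benefit function is a deliberate simplification.

```python
def net_annual_benefit(realized_share, forecast_cases, margin_per_case, annual_capacity_cost):
    """Net benefit if only `realized_share` of the forecast volume shows up."""
    return realized_share * forecast_cases * margin_per_case - annual_capacity_cost


def break_even_share(forecast_cases, margin_per_case, annual_capacity_cost):
    """Share of the forecast at which the recommendation flips from build to do not build."""
    return annual_capacity_cost / (forecast_cases * margin_per_case)


# Hypothetical inputs for illustration only.
forecast_cases = 10_000_000        # brand team's projected annual volume
margin_per_case = 0.50             # contribution margin in dollars per case
annual_capacity_cost = 3_000_000   # annualized cost of the new lines

flip_point = break_even_share(forecast_cases, margin_per_case, annual_capacity_cost)
benefit_at_forecast = net_annual_benefit(1.0, forecast_cases, margin_per_case, annual_capacity_cost)
benefit_at_half = net_annual_benefit(0.5, forecast_cases, margin_per_case, annual_capacity_cost)

print(f"Recommendation flips if less than {flip_point:.0%} of forecast volume materializes")
print(f"Net benefit at full forecast: ${benefit_at_forecast:,.0f}")
print(f"Net benefit at half of forecast: ${benefit_at_half:,.0f}")
```

Once the flip point is explicit, the conversation with the brand team changes from "is the forecast right?" to "how confident are we that volume lands above this threshold, and what would have to be true for it to do so?"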

What I am describing from my own experience is consistent with what the research shows.

A long-running finding in operations research is that many models are built and comparatively few actually drive decisions, and the breakdown is organizational, not technical. A widely cited review in the European Journal of Operational Research frames this as an implementation problem rooted in how models are connected (or not connected) to the people and processes that own the decision.

Professional credentialing bodies have recognized the same gap. The INFORMS Certified Analytics Professional blueprint explicitly lists business problem framing, stakeholder analysis, and business case development as core analytics competencies—not optional additions. The signal is clear: being analytically strong is necessary but not sufficient.

On the training side, a field study published in the European Journal of Operational Research tested the effects of structured decision training across roughly 1,000 decision makers and analysts. The results showed measurable improvement in proactive decision-making skills and decision satisfaction. The gap is real, and it is addressable. It is a training and design issue, not a talent issue.

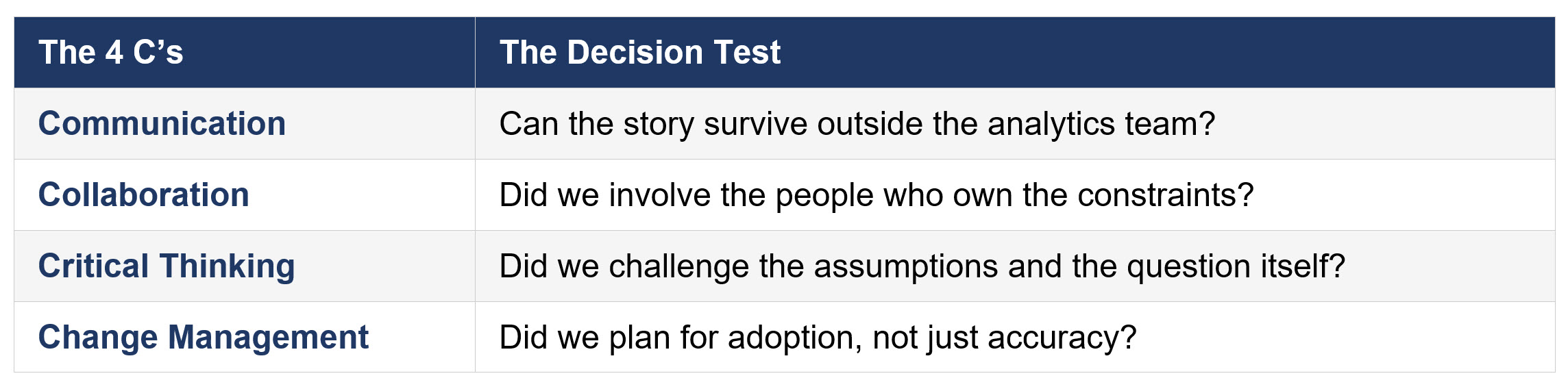

At Georgia Tech SCL, we organize this thinking around what we call the 4 C’s. These soft skills play a key role in the decision process. Each one asks a specific question about whether the decision, not just the analysis, was made well.

Figure 2: The 4 C’s: A Decision-Focused Framework (accessible version)

Notice what this framework does not include: model accuracy, data quality, or visualization quality. Those matter, and they are inputs to the decision. But a team can have a perfect model, a clean dataset, and a compelling dashboard and still fail all four of these tests.

The Powerade situation failed the Collaboration test: the supply chain team did not sufficiently interrogate the brand team’s assumptions. Little Rock failed the Critical Thinking test: the right question was not asked about what the model was not capturing. In both cases, the Communication and Change Management failures followed directly from those upstream gaps.

When all four are present, analysis becomes a decision. When one or more is missing, the translation from analysis to a solid recommendation is at risk.

This topic keeps coming up in conversations with companies, in work with practitioners, and in what we hear from students as they move into industry roles.

The tools are not the problem. AI-assisted analytics, optimization models, and advanced forecasting are real assets. But tools amplify the thinking behind them. Weak decision discipline combined with better tools is simply a faster path to the wrong answer.

If this shows up in your org, try the five-question diagnostic on your next recommendation before it hits leadership. If it surfaces gaps you cannot close quickly, SCL can help. We are building workshops and courseware on decision-focused critical thinking, and we will cover this in our June Lunch and Learn.

Questions or comments? Reach out to SCL.
